1. What is edge computing?

Edge computing is a data processing model where computation takes place at or near the source of data generation, rather than sending everything to a centralized data center or cloud platform. The term "edge" refers to the geographic or logical position at the periphery of a network, closest to end devices and users.

The core distinction between edge computing and traditional centralized models lies in where processing occurs: instead of sending all data to a single central hub, edge computing distributes computational capacity across many smaller points placed near the devices. An edge node can be a smart gateway in a factory, a mini server in a building, or even a sufficiently capable end device. Data is filtered, analyzed, and acted upon locally; only the information that genuinely needs further processing is sent up to the cloud.

2. How does edge computing work?

Edge computing distributes the data processing pipeline across multiple geographic layers. The layer closest to devices handles tasks that require immediate response, while the cloud layer manages long-term storage, deep analytics, and overall orchestration. This model significantly reduces the load on central infrastructure while ensuring near-real-time processing speeds.

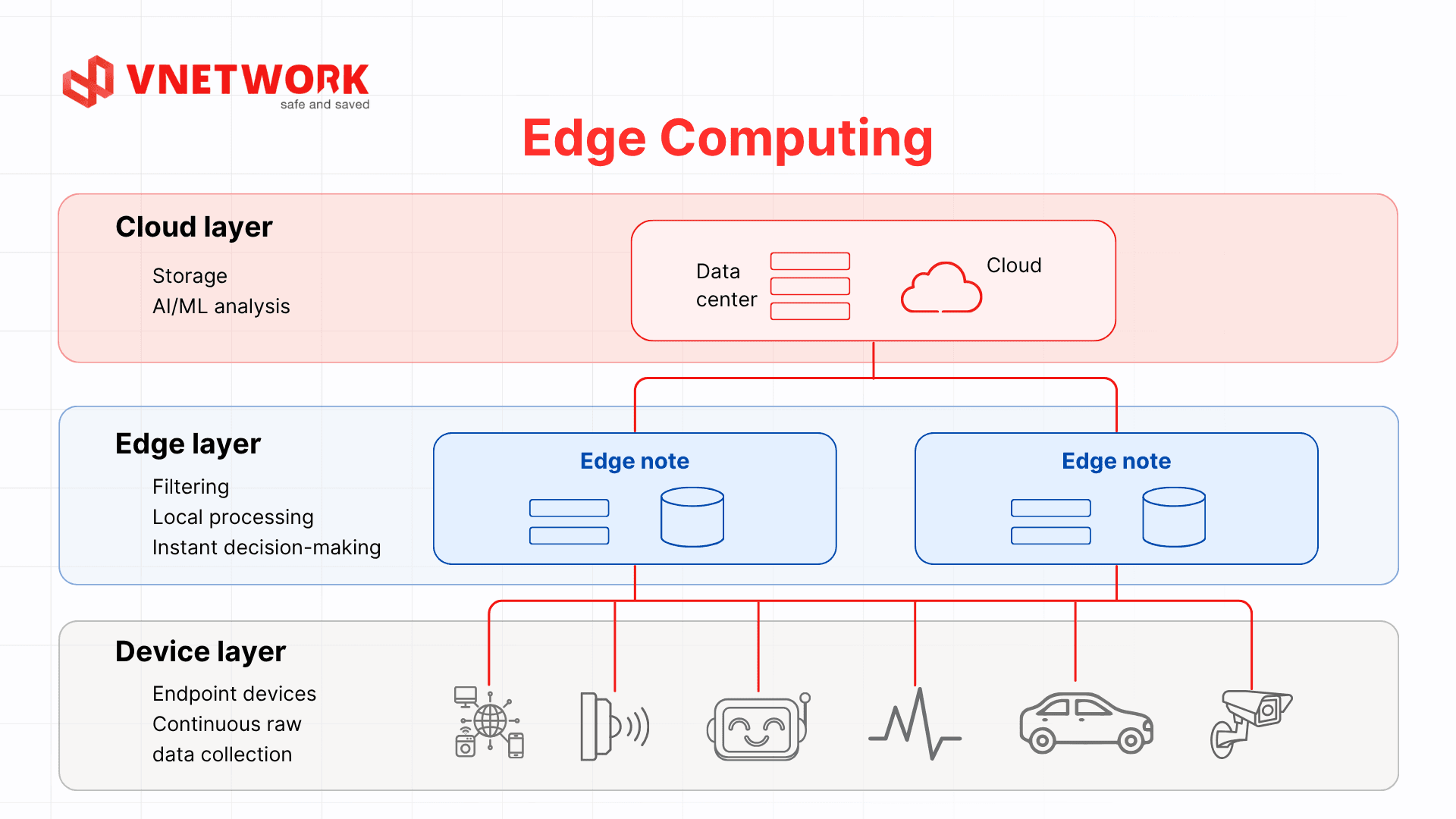

2.1. The three-layer architecture of edge computing

Device layer: Encompasses all end devices that generate data, including IoT sensors, security cameras, industrial machinery, connected vehicles, and wearable medical devices. At this layer, raw data is continuously collected and forwarded to the next layer for preliminary processing.

Edge layer (Edge node): This is the heart of the edge computing architecture. Edge nodes receive data from devices, perform local filtering, aggregation, analysis, and make immediate decisions. An edge node can be located at a factory, a telecommunications tower, a business branch, or any physical location close to the data source. Only truly necessary information is forwarded to the cloud.

Cloud layer: The cloud platform receives pre-processed data from edge nodes. Long-term storage, deep analysis using machine learning, AI model updates, and policy orchestration for the entire edge system all happen here. Because the cloud does not need to handle all raw data, the workload is dramatically reduced compared to traditional centralized computing.

2.2. Data flow from device to edge node

Data moves through four main steps in a typical edge computing system:

- Collection: End devices continuously record real-world data such as temperature, images, GPS coordinates, and biometric signals.

- Filtering and pre-processing: Edge nodes eliminate noisy, duplicate, or irrelevant data on the spot, reducing the volume of data to be transmitted by 70 to 90 percent depending on the scenario.

- Local processing and decision-making: Edge nodes perform analysis and trigger immediate responses such as alerts, machine adjustments, and status updates without waiting for a response from the cloud.

- Synchronization to the cloud: Processed results, aggregated data, and exception cases requiring deeper analysis are sent to the cloud platform, either periodically or when triggered by specific events.
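The four steps above can be sketched as a tiny edge node in Python. This is a minimal illustration under stated assumptions, not a reference design. The class name `EdgeNode`, the deadband filter, the 80.0 alert threshold and the batch size of 5 are all hypothetical choices. The `uploads` list merely stands in for a real cloud connection.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EdgeNode:
    """Hypothetical edge node: collection, filtering, local action, sync."""
    deadband: float = 0.5           # assumed: ignore changes smaller than this
    alert_threshold: float = 80.0   # assumed: act locally above this value
    batch_size: int = 5             # assumed: routine sync after this many kept readings
    alerts: List[float] = field(default_factory=list)
    uploads: List[List[float]] = field(default_factory=list)  # stands in for the cloud
    _batch: List[float] = field(default_factory=list)
    _last_kept: Optional[float] = None

    def ingest(self, reading: float) -> None:
        # 1. Collection: a device pushes a raw reading to the node.
        # 2. Filtering: drop readings that barely differ from the last kept one.
        if self._last_kept is not None and abs(reading - self._last_kept) < self.deadband:
            return
        self._last_kept = reading
        self._batch.append(reading)
        # 3. Local decision: react immediately, with no cloud round-trip.
        if reading > self.alert_threshold:
            self.alerts.append(reading)
            self.flush()  # 4a. Event-driven sync for exception cases.
        elif len(self._batch) >= self.batch_size:
            self.flush()  # 4b. Volume-based sync, a stand-in for periodic sync.

    def flush(self) -> None:
        if self._batch:
            self.uploads.append(list(self._batch))
            self._batch.clear()


node = EdgeNode()
for reading in [20.0, 20.1, 20.2, 21.0, 85.0, 85.1, 22.0]:
    node.ingest(reading)
node.flush()
print(node.alerts)   # [85.0]
print(node.uploads)  # [[20.0, 21.0, 85.0], [22.0]]
```

In this run, seven raw readings become four forwarded values. A real deployment would tune the deadband and threshold per sensor.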

3. Comparing edge computing, cloud computing, and fog computing

Edge computing, cloud computing, and fog computing are often mentioned together but differ in scope and mechanism. Understanding these differences helps organizations choose the right model or combine all three to optimize their overall infrastructure.

Cloud computing concentrates all computational resources at large-scale data centers, delivering high processing power and virtually unlimited storage, but it depends entirely on network connectivity and introduces latency due to physical distance. Fog computing is a distributed intermediate layer between devices and the cloud, extending processing capabilities across multiple infrastructure tiers such as smart gateways and switches. Edge computing is more specific: processing occurs directly at the device or at a node placed as close to the device as possible, responding within a few milliseconds without passing through any intermediate layer. In other words, edge computing is a subset of fog computing, focused on the layer closest to the device.

The table below summarizes the key comparison criteria across the three models. Refer to the edge computing vs cloud computing comparison article for a deeper analysis.

| Criteria | Edge computing | Fog computing | Cloud computing |
|---|---|---|---|
| Latency | Extremely low (a few milliseconds) | Low to medium, depending on fog layer position | Higher, depending on distance to data center |
| Deployment location | At the device or node placed nearest the device: factory floor, field sites, vehicles | Intermediate distributed layer: gateways, switches, local servers in buildings or campuses | Centralized at large-scale data centers, typically far from end users |
| Data processing | Local, immediate, suitable for real-time tasks | Aggregates and processes data from multiple devices before sending to cloud | Centralized, suited for large-scale data analysis, machine learning, long-term storage |
| Bandwidth | Lowest consumption, only sends results or necessary data | Medium; fog node aggregates and compresses before uploading to cloud | High consumption when large volumes of raw data must be continuously sent |
| Security | Sensitive data processed on-site, lower risk of leakage during transmission | Data controlled within internal infrastructure scope before going to cloud | Centralized, easier policy management, but data must leave the device |
| Best suited for | Industrial IoT, autonomous vehicles, real-time healthcare, applications requiring instant response | Smart building systems, university campuses, industrial parks with many IoT devices | Big data analytics, storage, AI model training, applications that do not require instant response |

In practice, most modern systems deploy a hybrid model across all three layers: edge nodes handle immediate processing at the device level, fog nodes aggregate and coordinate within internal infrastructure, while the cloud manages long-term storage, AI model training, and global policy orchestration. This three-layer architecture leverages the strengths of each model to achieve optimal performance and flexibility.
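The division of labor in such a hybrid deployment can be expressed as a placement rule. The sketch below is a hypothetical policy, not a standard. The 10 ms and 100 ms cut-offs, the `Task` fields and the example workloads are assumptions.

```python
from dataclasses import dataclass

# Assumed latency budgets (ms); real cut-offs depend on the network and workload.
EDGE_MAX_MS = 10
FOG_MAX_MS = 100


@dataclass
class Task:
    name: str
    deadline_ms: float               # how quickly a response is needed
    needs_global_view: bool = False  # e.g. training on data from every site


def place(task: Task) -> str:
    """Hypothetical placement policy for a hybrid edge/fog/cloud deployment."""
    if task.needs_global_view:
        return "cloud"   # only the cloud sees data from all sites
    if task.deadline_ms <= EDGE_MAX_MS:
        return "edge"    # instant response at the device
    if task.deadline_ms <= FOG_MAX_MS:
        return "fog"     # aggregation and coordination within the site
    return "cloud"       # long-running or non-urgent work


tasks = [
    Task("emergency-stop", 5),
    Task("building-hvac-balancing", 50),
    Task("retrain-defect-model", 3_600_000, needs_global_view=True),
    Task("monthly-report", 86_400_000),
]
placement = {t.name: place(t) for t in tasks}
print(placement)
```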

4. Benefits of edge computing for businesses

Edge computing delivers clear competitive advantages in four key areas: operational performance, infrastructure costs, continuous availability, and data security compliance. Below is a detailed analysis of each.

4.1. Minimizing latency

When data is processed at the edge node rather than traveling across a network to a central facility, response times drop to a few milliseconds. This advantage is critical for applications that demand instant response: emergency braking systems in autonomous vehicles, ICU patient monitoring equipment, industrial robots, and defect detection systems on production lines. A delay of a few hundred milliseconds in these scenarios can lead to serious consequences.
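Physical distance alone sets a floor on latency, because signals in optical fiber travel at roughly two-thirds of the speed of light. The sketch below computes only that propagation floor for a few hypothetical distances. Real round-trip times are higher once routing detours, queuing and processing are added.

```python
# Light in optical fiber travels at roughly 2e8 m/s (about two-thirds of c).
SPEED_IN_FIBER_M_PER_S = 2.0e8


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone.

    Routing, queuing, and processing delays are ignored, so real values are higher.
    """
    one_way_s = distance_km * 1_000 / SPEED_IN_FIBER_M_PER_S
    return 2 * one_way_s * 1_000


# Hypothetical distances between a device and the place that processes its data.
for label, km in [("on-site edge node", 1), ("regional fog site", 50), ("distant cloud region", 1500)]:
    print(f"{label:>20}: {min_round_trip_ms(km):6.2f} ms")
```

The results are about 0.01 ms, 0.5 ms and 15 ms respectively. The cloud floor alone is already far above the edge case, before any real-world overhead is counted.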

4.2. Saving bandwidth and transmission costs

Rather than sending all raw data to the cloud, edge nodes filter and forward only what is truly necessary. A surveillance camera, for example, does not need to stream full video continuously; it can send an alert only when an anomaly is detected. This significantly reduces transmission traffic, lowering bandwidth costs and relieving the load on the central network infrastructure.
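A rough calculation shows the scale of the savings. The figures are assumptions chosen for illustration:

- a 4 Mbps camera stream
- 20 anomalies per day
- a 10-second clip uploaded with each alert

```python
SECONDS_PER_DAY = 86_400


def bytes_per_second(bitrate_mbps: float) -> float:
    return bitrate_mbps * 1e6 / 8  # megabits per second to bytes per second


def daily_bytes_streaming(bitrate_mbps: float) -> float:
    """Camera uploads its full stream around the clock."""
    return bytes_per_second(bitrate_mbps) * SECONDS_PER_DAY


def daily_bytes_event_only(bitrate_mbps: float, events_per_day: int, clip_s: float) -> float:
    """Analytics runs on-site; only a short clip per detected anomaly is uploaded."""
    return bytes_per_second(bitrate_mbps) * events_per_day * clip_s


# Hypothetical camera: 4 Mbps stream, 20 anomalies a day, 10-second clip each.
full = daily_bytes_streaming(4)
events = daily_bytes_event_only(4, events_per_day=20, clip_s=10)
print(f"continuous: {full / 1e9:.1f} GB/day, event-only: {events / 1e6:.0f} MB/day")
print(f"reduction: {1 - events / full:.1%}")
```

Under these assumptions, continuous streaming uses 43.2 GB per day and event-only uploads use 100 MB, a reduction of about 99.8%. Actual savings depend on how often anomalies occur.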

4.3. Ensuring continuous operation during connectivity loss

Systems that depend entirely on the cloud become inoperative when the connection to the central facility is disrupted. Edge computing allows edge nodes to continue processing, making decisions, and maintaining local operations even when Internet connectivity or the link to the data center is lost. This capability is essential in critical infrastructure environments such as manufacturing plants, power grids, and healthcare facilities in remote areas.
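The store-and-forward behavior described above can be sketched as follows. The node keeps making local decisions while the uplink is down, queues its records, and drains the backlog when connectivity returns. The `StoreAndForward` class, the 9.0 bar pressure rule and the buffer size are hypothetical. The `delivered` list stands in for a real cloud endpoint.

```python
from collections import deque


class StoreAndForward:
    """Hypothetical edge-node uplink: local decisions never wait for the cloud link."""

    def __init__(self, max_buffered: int = 10_000):
        # Bounded backlog: during a very long outage the oldest records are dropped first.
        self.backlog = deque(maxlen=max_buffered)
        self.online = True
        self.delivered = []  # stands in for records received by the cloud

    def handle(self, reading: dict) -> str:
        # Local rule (assumed): shut the valve if pressure exceeds 9.0 bar.
        action = "shutdown" if reading["pressure_bar"] > 9.0 else "ok"
        self.backlog.append({**reading, "action": action})
        if self.online:
            self._drain()
        return action

    def set_online(self, online: bool) -> None:
        self.online = online
        if online:
            self._drain()  # catch up once connectivity returns

    def _drain(self) -> None:
        while self.backlog:
            self.delivered.append(self.backlog.popleft())


node = StoreAndForward()
node.handle({"pressure_bar": 5.0})
node.set_online(False)  # link to the data center drops
actions = [node.handle({"pressure_bar": p}) for p in (7.5, 9.6)]
queued = len(node.backlog)
node.set_online(True)
print(actions, queued, len(node.delivered))  # ['ok', 'shutdown'] 2 3
```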

4.4. On-site data security and compliance

Many industries require that sensitive data never leave a specific geographic boundary or internal infrastructure, particularly in healthcare, finance, and government. Edge computing meets this requirement by processing and storing data on-site. Patient data, financial transactions, and personally identifiable information can be analyzed without leaving the premises, minimizing leakage risk during transmission and supporting compliance with data protection regulations.
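One way an edge node can keep identifiers on-site is to replace them with a keyed hash and upload only aggregates. The sketch below assumes a secret key stored on the edge node (`SITE_KEY`, hypothetical) and uses HMAC-SHA256 from Python's standard library. Whether pseudonymized data satisfies a particular regulation depends on that regulation. Treat this as an illustration of the data flow, not a compliance recipe.

```python
import hashlib
import hmac
from statistics import mean
from typing import Dict, List

# Hypothetical secret that stays on the edge node; the cloud never receives it.
SITE_KEY = b"replace-with-a-secret-provisioned-on-site"


def pseudonymize(patient_id: str) -> str:
    """Keyed hash: the cloud can link records over time without seeing the real ID."""
    return hmac.new(SITE_KEY, patient_id.encode(), hashlib.sha256).hexdigest()[:16]


def summarize_for_cloud(readings: Dict[str, List[int]]) -> List[dict]:
    """Raw heart-rate series stay on-site; only a pseudonym and aggregates are uploaded."""
    return [
        {"patient": pseudonymize(pid), "mean_hr": round(mean(values), 1), "max_hr": max(values)}
        for pid, values in readings.items()
    ]


local_readings = {"MRN-001": [72, 75, 71], "MRN-002": [88, 95, 102]}
payload = summarize_for_cloud(local_readings)
print(payload)
```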

In summary, the four core benefits of edge computing are:

- Extremely low latency and instant response, suited for real-time applications

- Bandwidth savings through data filtering at the source

- Continuous operation even when connectivity to the central facility is lost

- On-site protection of sensitive data, supporting regulatory compliance

5. Real-world applications of edge computing

Edge computing is being widely adopted across many sectors, from industrial manufacturing to healthcare, transportation, and digital content distribution. Below are the most notable application groups today.

5.1. IoT and smart manufacturing

In industrial manufacturing environments, thousands of IoT sensors continuously collect data from machinery, production lines, and working conditions around the clock. Edge nodes placed directly at the factory process sensor data in real time to detect early signs of impending equipment failure (predictive maintenance), automatically adjust production line parameters, and perform quality control as products are made. The result is reduced unplanned downtime and increased productivity without the expense of expanding network infrastructure.
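A minimal predictive-maintenance check that could run on such an edge node flags vibration readings far outside the recent baseline. The rolling z-score used here is a deliberately simple substitute for the models used in practice. The following are hypothetical:

- the window of 5 samples
- the limit of 3 standard deviations
- the sample data

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(samples, window=5, z_limit=3.0):
    """Return indices of samples that deviate sharply from the rolling baseline."""
    history = deque(maxlen=window)
    flagged = []
    for i, x in enumerate(samples):
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            # Skip flat baselines (sigma == 0) to avoid dividing by zero.
            if sigma > 0 and abs(x - mu) / sigma > z_limit:
                flagged.append(i)
        history.append(x)
    return flagged


# Hypothetical vibration amplitudes (mm/s) from a motor bearing sensor.
vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 0.9, 3.5, 1.0, 1.1]
print(detect_anomalies(vibration))  # [7]
```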

5.2. Autonomous vehicles and intelligent transportation

Autonomous vehicles must process data from dozens of radar, lidar, and camera sensors within a few milliseconds to make safe control decisions. The entire process of obstacle recognition, motion prediction, and steering must occur locally on the vehicle; it cannot wait for data to be sent to a central server and a response returned. Smart traffic systems at intersections also leverage edge computing to coordinate signal timing based on actual traffic volumes in real time.
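Signal timing based on measured volumes can be illustrated with a simple proportional rule computed locally at the intersection. This is a simplified sketch rather than a traffic-engineering standard. All of the following are assumptions:

- the 90-second cycle
- 12 seconds of lost time
- the 7-second minimum green
- the vehicle counts

```python
def split_green_time(volumes, cycle_s=90.0, lost_time_s=12.0, min_green_s=7.0):
    """Divide one signal cycle's green time among approaches by measured volume.

    Each approach first receives a minimum green; the remaining effective green
    is shared in proportion to the vehicle counts reported by edge sensors.
    """
    effective = cycle_s - lost_time_s
    spare = effective - min_green_s * len(volumes)
    if spare < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(volumes.values())
    return {
        approach: min_green_s + (spare * count / total if total else spare / len(volumes))
        for approach, count in volumes.items()
    }


# Hypothetical counts (vehicles per hour) measured at the intersection's edge node.
plan = split_green_time({"north-south": 600, "east-west": 200})
print(plan)  # {'north-south': 55.0, 'east-west': 23.0}
```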

5.3. Healthcare and remote patient monitoring

Next-generation wearable devices and medical equipment can continuously monitor heart rate, blood pressure, blood oxygen levels, and many other biometric indicators. Edge computing enables instant analysis directly on the device or at a gateway in the patient's room, triggering alerts as soon as an anomaly is detected without requiring a stable connection to a central server. In robot-assisted remote surgery, latency must be held to milliseconds to ensure patient safety.
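On-device alerting usually needs some debouncing so that a single noisy sample does not raise an alarm. The sketch below confirms a low blood-oxygen episode only after several consecutive low readings. The 90% threshold and the 3-reading rule are illustrative assumptions, not clinical guidance.

```python
def spo2_alerts(readings, threshold=90, consecutive=3):
    """Return the indices at which a low-SpO2 episode is confirmed.

    An alert fires once per episode, after `consecutive` readings in a row fall
    below `threshold`, so an isolated dip does not trigger it.
    """
    alerts, run = [], 0
    for i, value in enumerate(readings):
        run = run + 1 if value < threshold else 0
        if run == consecutive:
            alerts.append(i)
    return alerts


# Hypothetical SpO2 readings (%) from a wearable, one per second.
readings = [97, 96, 89, 95, 88, 87, 86, 85, 94, 89, 88, 87]
print(spo2_alerts(readings))  # [6, 11]
```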

5.4. Retail, e-commerce, and customer experience

Smart camera systems in stores can analyze customer behavior, track inventory, and detect fraud directly at edge nodes installed on the premises. Self-service kiosks process payments and facial recognition locally, ensuring a smooth experience even when network connectivity is unstable. For large-scale e-commerce, edge computing supports content personalization and product recommendations with low latency, right within the browsing session.

5.5. Video streaming and content delivery

In digital content delivery, edge computing forms the foundation of modern CDN infrastructure. Static content and video are cached at distributed edge nodes close to end users, reducing page load latency and improving the quality of online video playback. Load balancing mechanisms at the edge intelligently distribute user traffic, maintaining stable performance even during traffic spikes such as live broadcasts or major product launches.
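The load balancing mentioned above can be sketched as a selection rule: send each user to the nearest healthy PoP that still has headroom. This is a hypothetical illustration, not how any particular CDN works. Production CDNs usually steer traffic through DNS or anycast routing. The `PoP` fields, the 85% load cut-off and the PoP names are assumptions.

```python
from dataclasses import dataclass
from typing import List

MAX_LOAD = 0.85  # assumed: avoid PoPs running above 85% utilization


@dataclass
class PoP:
    name: str          # hypothetical identifier
    rtt_ms: float      # measured latency from the user's network
    load: float        # current utilization, 0.0 to 1.0
    healthy: bool = True


def choose_pop(pops: List[PoP]) -> PoP:
    """Nearest healthy PoP with headroom; if all are busy, the nearest healthy one."""
    healthy = [p for p in pops if p.healthy]
    if not healthy:
        raise RuntimeError("no healthy PoP available")
    with_headroom = [p for p in healthy if p.load < MAX_LOAD]
    return min(with_headroom or healthy, key=lambda p: p.rtt_ms)


pops = [PoP("pop-a", 4.0, 0.40), PoP("pop-b", 6.5, 0.30), PoP("pop-c", 21.0, 0.10)]
normal = choose_pop(pops).name    # nearest PoP: pop-a
pops[0].load = 0.95               # traffic spike at pop-a
spike = choose_pop(pops).name     # spill over to pop-b
pops[1].healthy = False           # pop-b fails
failover = choose_pop(pops).name  # reroute to pop-c
print(normal, spike, failover)    # pop-a pop-b pop-c
```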

6. What types of businesses are suited for edge computing?

Edge computing suits any organization that needs to process data in real time, operates systems in environments with unstable connectivity, or must keep sensitive data on-site. It is not exclusive to large enterprises; small and medium businesses undergoing digital transformation can also adopt edge computing through pre-packaged solutions or managed edge services.

- Manufacturing and industry: Companies operating automated production lines, industrial robots, or dense IoT sensor networks need on-site data processing to ensure workplace safety and predictive maintenance.

- Healthcare: Hospitals, clinics, and medical device providers need real-time patient monitoring and must protect health data in compliance with legal regulations.

- Logistics and transportation: Organizations managing vehicle fleets, cold supply chains, or smart ports need to process tracking data on-site and raise anomaly alerts immediately.

- Retail and e-commerce: Retail chains deploying smart cameras, self-service kiosks, or automated inventory management systems benefit from the local processing capabilities of edge computing.

- Telecommunications and media: Network operators and content distributors need edge infrastructure close to users to deliver high-quality streaming and consistent experiences nationwide.

To determine the appropriate level of investment in edge computing, businesses should evaluate three key factors: the latency requirements of their applications, the volume and frequency of data that needs to be processed at the source, and the sensitivity of their data under current regulations. Learn more about data center infrastructure to understand how edge nodes complement an existing data center architecture.

7. The development trajectory of edge computing

Edge computing is entering a period of strong acceleration driven by the convergence of several foundational technologies. Understanding these trends helps businesses plan long-term infrastructure and seize opportunities before competition intensifies.

7.1. Edge computing and 5G: two technologies that drive each other forward

5G and edge computing share a symbiotic relationship: 5G provides high bandwidth and ultra-low latency at the wireless transmission layer, while edge computing processes the large volumes of data generated by 5G-connected devices close to the source, without sending everything to the cloud. In the Multi-access Edge Computing (MEC) model, telecommunications carriers deploy computational capacity at or near 5G base stations, placing it as close to users as possible. This combination unlocks applications that were previously impractical, such as real-time augmented reality (AR), remote robot control, and autonomous vehicles operating at city scale.

7.2. Edge AI: artificial intelligence running directly on devices

The most prominent trend today is the migration of AI models from the cloud to edge nodes and end devices. Next-generation dedicated AI chips make it possible to run image recognition, language processing, and sensor data analysis tasks directly on compact devices with low power consumption. Edge AI completely eliminates dependence on cloud connectivity for inference tasks, enabling instant system responses and keeping sensitive data from leaving the device.

7.3. Market growth and deployment pressure

The global edge computing market is in a phase of strong growth, driven by IoT demand, Edge AI, and rapidly expanding 5G infrastructure. Increasingly strict data localization regulations in many countries are also pushing businesses to consider on-site data processing models rather than relying entirely on centralized cloud. In this context, businesses that invest early in edge infrastructure will have a clear competitive advantage as real-time processing becomes the industry standard.

8. VNCDN: the perfect content acceleration complement to edge computing

CDN and edge computing share the same foundational philosophy: bringing processing and delivery closer to end users rather than depending on a single central point. If edge computing optimizes data processing at the source, CDN completes that loop by ensuring digital content reaches users as quickly as possible. These two technologies do not replace each other; they complement each other within a comprehensive distributed infrastructure.

VNETWORK developed VNCDN as a smart content acceleration and delivery solution, operating a globally distributed PoP (Point of Presence) network deployed across all major ISPs in Vietnam. When a business's edge computing system processes and creates content at distributed nodes, VNCDN takes on the role of delivering that content to end users with the lowest possible latency.

VNCDN delivers five core benefits for businesses:

- Speed optimization and latency reduction: Content is cached at PoPs close to users, significantly reducing page load times. Supporting next-generation HTTP/3 and QUIC protocols, VNCDN maintains stable transfer speeds even when users access from mobile connections or areas with variable network conditions.

- Extreme traffic capacity: Intelligent traffic distribution across the entire PoP network allows VNCDN to handle traffic spike events, from live broadcasts with millions of viewers to large-scale e-commerce flash sales, without requiring origin server infrastructure upgrades.

- High availability: The distributed architecture of VNCDN eliminates single points of failure. When one PoP encounters an issue, traffic is automatically rerouted to nearby PoPs, ensuring uninterrupted service. Smart caching also allows content to be served even when the origin server is temporarily unavailable.

- Enhanced security: VNCDN integrates Layer 3 and Layer 4 DDoS protection, Rate Limiting, Token Access, and SSL, protecting the entire content delivery pipeline from common attacks without impacting delivery speeds for legitimate users.

- Cost optimization: VNCDN's smart caching mechanism significantly reduces the number of direct requests to the origin server, lowering bandwidth usage and the operational costs of the underlying infrastructure. Businesses can scale to serve users nationally and internationally without proportionally increasing infrastructure costs.
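The origin-offload effect described in the cost-optimization point can be illustrated with a small cache that combines a per-entry TTL with LRU eviction. This is a generic sketch, not VNCDN's implementation. The class name `EdgeCache`, the capacity, the 60-second TTL and the injectable `clock` are all assumptions. The `clock` is used here to simulate time passing.

```python
import time
from collections import OrderedDict


class EdgeCache:
    """Hypothetical edge cache: LRU eviction plus a per-entry TTL."""

    def __init__(self, fetch_from_origin, capacity=1000, ttl_s=60.0, clock=time.monotonic):
        self.fetch = fetch_from_origin
        self.capacity = capacity
        self.ttl = ttl_s
        self.clock = clock
        self.store = OrderedDict()  # key -> (expires_at, value), least recently used first
        self.origin_requests = 0

    def get(self, key):
        now = self.clock()
        entry = self.store.get(key)
        if entry is not None and entry[0] > now:
            self.store.move_to_end(key)  # hit: mark as most recently used
            return entry[1]
        # Miss or expired entry: fetch from the origin server and cache it.
        value = self.fetch(key)
        self.origin_requests += 1
        self.store[key] = (now + self.ttl, value)
        self.store.move_to_end(key)
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict the least recently used entry
        return value


fake_time = [0.0]  # simulated clock keeps the example deterministic
cache = EdgeCache(lambda path: f"<content of {path}>", capacity=2, ttl_s=60.0,
                  clock=lambda: fake_time[0])

for path in ["/index.html", "/logo.png", "/index.html", "/index.html"]:
    cache.get(path)
warm = cache.origin_requests  # 2: two misses, then two hits

fake_time[0] = 61.0           # TTL has elapsed
cache.get("/index.html")      # expired, so refetched from the origin
cache.get("/style.css")       # new object; evicts the least recently used entry
print(warm, cache.origin_requests, list(cache.store))  # 2 4 ['/index.html', '/style.css']
```

The tiny capacity and short TTL here only make the example easy to trace. With realistic settings, repeat requests for popular content would mostly be hits, which is where the reduction in origin traffic comes from.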

VNCDN is trusted by media organizations, e-commerce businesses, and financial institutions with high requirements for speed, stability, and security in digital content delivery across Vietnam and the region.

9. Conclusion

Edge computing is reshaping how digital infrastructure is built and operated in the era of IoT and real-time data. By bringing processing capacity closer to devices and end users, edge computing resolves the inherent limitations of centralized cloud models in terms of latency, bandwidth, and continuity. From smart factories and autonomous vehicles to remote healthcare and digital content delivery, edge computing is becoming an indispensable component of modern enterprise infrastructure.

FAQ - Frequently asked questions about edge computing

1. Will edge computing completely replace cloud computing?

No. Edge computing and cloud computing are two complementary models, not substitutes. Edge computing handles tasks that require instant response and sensitive data on-site, while cloud computing manages long-term storage, big data analytics, and AI model training. Most modern systems combine both to leverage the unique strengths of each architecture.

2. How is edge computing different from fog computing?

Fog computing is a broader concept that describes all distributed computing layers between end devices and the cloud, encompassing multiple intermediate tiers such as gateways, switches, and local servers. Edge computing is more specific, referring to processing that occurs at or very close to the device generating the data. In other words, edge computing is a subset of fog computing, focused on the processing layer closest to the device.

3. Do small businesses need to implement edge computing?

It depends on actual needs. If a small business operates surveillance cameras, IoT devices, or applications that require fast response, edge computing offers clear benefits. Many edge computing solutions are now pre-packaged at accessible price points, without requiring a specialized technical team. For businesses that only need to run a website or standard office applications, cloud or CDN solutions are the more cost-effective choice.

4. How is security managed at edge nodes?

Securing edge nodes requires a multi-layered strategy because nodes are deployed in dispersed physical locations. Common measures include on-site data encryption and encryption in transit, strong authentication for all devices connecting to the edge network, regular firmware and security software updates, and applying a Zero Trust model in which no device or user is trusted by default regardless of whether they are inside or outside the internal network.
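As an illustration of strong device authentication, the sketch below uses a shared-key HMAC challenge-response from Python's standard library. It is chosen only because it fits in a few lines. Production deployments often rely on mutual TLS with per-device certificates instead. `DEVICE_KEYS`, `issue_challenge`, `device_response` and `verify` are hypothetical names. A real verifier would also track issued challenges so each one can be used only once.

```python
import hashlib
import hmac
import secrets

# Hypothetical registry of per-device keys provisioned at install time.
DEVICE_KEYS = {"sensor-17": secrets.token_bytes(32)}


def issue_challenge() -> bytes:
    """Fresh random nonce, so a recorded response cannot simply be replayed."""
    return secrets.token_bytes(16)


def device_response(key: bytes, challenge: bytes) -> bytes:
    """Computed on the device: proves possession of the key without sending it."""
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify(device_id: str, challenge: bytes, response: bytes) -> bool:
    key = DEVICE_KEYS.get(device_id)
    if key is None:
        return False  # Zero Trust: unknown devices get no access by default
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)  # constant-time comparison


challenge = issue_challenge()
genuine = verify("sensor-17", challenge, device_response(DEVICE_KEYS["sensor-17"], challenge))
forged = verify("sensor-17", challenge, b"\x00" * 32)
unknown = verify("camera-99", challenge, b"\x00" * 32)
print(genuine, forged, unknown)  # True False False
```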

5. Is VNCDN related to edge computing?

VNCDN applies the core principles of a distributed architecture similar to edge computing: bringing content as close to end users as possible through a distributed PoP network. Rather than serving content from a single central server, VNCDN caches and distributes content from points of presence located in Vietnam and globally, reducing latency and improving page load speeds in line with the edge computing philosophy applied to digital content delivery.